Wednesday, November 9, 2016

SearchCap: Google mobile index, move to Canada & repeat offender penalties

Below is what happened in search today, as reported on Search Engine Land and from other places across the web.

The post SearchCap: Google mobile index, move to Canada & repeat offender penalties appeared first on Search Engine Land.

from

http://searchengineland.com/searchcap-google-mobile-index-move-canada-repeat-offender-penalties-262936

See how Google is showing even more Shopping Ads on desktop this season

On product queries, product listing ads are dominating over text ads.

The post See how Google is showing even more Shopping Ads on desktop this season appeared first on Search Engine Land.

from

http://searchengineland.com/see-google-showing-even-shopping-ads-desktop-season-262896

‘Tis the season: 6 ways to prepare for holiday shoppers

Whether you've got your holiday marketing plan all mapped out or are just starting now, columnist Christi Olson has tips to prepare your search campaigns for the season.

The post ‘Tis the season: 6 ways to prepare for holiday shoppers appeared first on Search Engine Land.

from

http://searchengineland.com/tis-season-6-ways-prepare-holiday-season-262623

FAQ: All about the Google mobile-first index

Here's everything we know about the Google mobile-first index.

The post FAQ: All about the Google mobile-first index appeared first on Search Engine Land.

from

http://searchengineland.com/faq-google-mobile-first-index-262751

As Trump racked up Electoral College votes, searches about moving to Canada spiked

Briefly overwhelmed by traffic from the US, the Canadian immigration website was also down last night.

The post As Trump racked up Electoral College votes, searches about moving to Canada spiked appeared first on Search Engine Land.

from

http://searchengineland.com/trump-racked-electoral-college-votes-searches-moving-canada-spiked-262862

Google publishes best practices for search engine friendly Progressive Web Apps (PWAs)

Google publishes their first recommendations for making PWAs indexable in Google.

The post Google publishes best practices for search engine friendly Progressive Web Apps (PWAs) appeared first on Search Engine Land.

from

http://searchengineland.com/google-publishes-best-practices-search-engine-friendly-progressive-web-apps-pwas-262871

Google to fix bug where small sitelinks don’t show up in the search results

Google said they fixed the bug where small sitelinks were not showing up in the search results.

The post Google to fix bug where small sitelinks don’t show up in the search results appeared first on Search Engine Land.

from

http://searchengineland.com/google-fix-bug-small-sitelinks-dont-show-search-results-262863

Are you optimizing your off-site content?

In our last post, we looked at how to measure the true performance of your content, both on-site and off-site, through the tracking of engagement. Today, we want to discuss how to improve the discoverability of your off-site content in search, so it can be found in the first place. When marketers think of […]

The post Are you optimizing your off-site content? appeared first on Search Engine Land.

from

http://searchengineland.com/optimizing-off-site-content-262516

Tuesday, November 8, 2016

Google Safe Browsing “Repeat Offenders” will get a 30-day time-out

Do you continue to violate Google's safe browsing policies? Well, Google is about to get stricter on you.

The post Google Safe Browsing “Repeat Offenders” will get a 30-day time-out appeared first on Search Engine Land.

from

http://searchengineland.com/google-safe-browsing-repeat-offenders-will-get-30-day-time-262796

Google retiring Map Maker to speed up the Maps editing process

Company says that new process, using Google Maps app and Google Search, will expedite publication of changes and additions.

The post Google retiring Map Maker to speed up the Maps editing process appeared first on Search Engine Land.

from

http://searchengineland.com/google-retiring-map-maker-speed-maps-editing-process-262779

Google officially drops the Knowledge Graph snippet overlays over low usage

After 2 1/2 years, Google has dropped the search snippet feature.

The post Google officially drops the Knowledge Graph snippet overlays over low usage appeared first on Search Engine Land.

from

http://searchengineland.com/google-officially-drops-knowledge-graph-snippet-overlays-low-usage-262775

SearchCap: Vote says Google, link truths and more

Below is what happened in search today, as reported on Search Engine Land and from other places across the web.

The post SearchCap: Vote says Google, link truths and more appeared first on Search Engine Land.

from

http://searchengineland.com/searchcap-vote-says-google-link-truths-262773

Compare 13 marketing automation platform providers

Virtually every marketing automation platform provides three core capabilities: email marketing, website visitor tracking and a central marketing database. From there, vendors begin to differentiate by providing additional tools — which may be included in the base price or be premium priced — that offer advanced functionality. B2B Marketing Automation Platforms: A Marketer’s Guide examines […]

The post Compare 13 marketing automation platform providers appeared first on Search Engine Land.

from

http://searchengineland.com/compare-13-marketing-automation-platform-providers-262767

I'm With Her

The short version:

The long version: Inside the invisible government: war, propaganda, Clinton & Trump

Or, if you prefer video:

from

http://feedproxy.google.com/~r/seobook/seobook/~3/zDMKQo1j4Qw/im-her

9 hard truths about links

Link building is difficult, and columnist Julie Joyce reminds us that there are no risk-free, fool-proof tactics within this discipline.

The post 9 hard truths about links appeared first on Search Engine Land.

from

http://searchengineland.com/9-hard-truths-links-262106

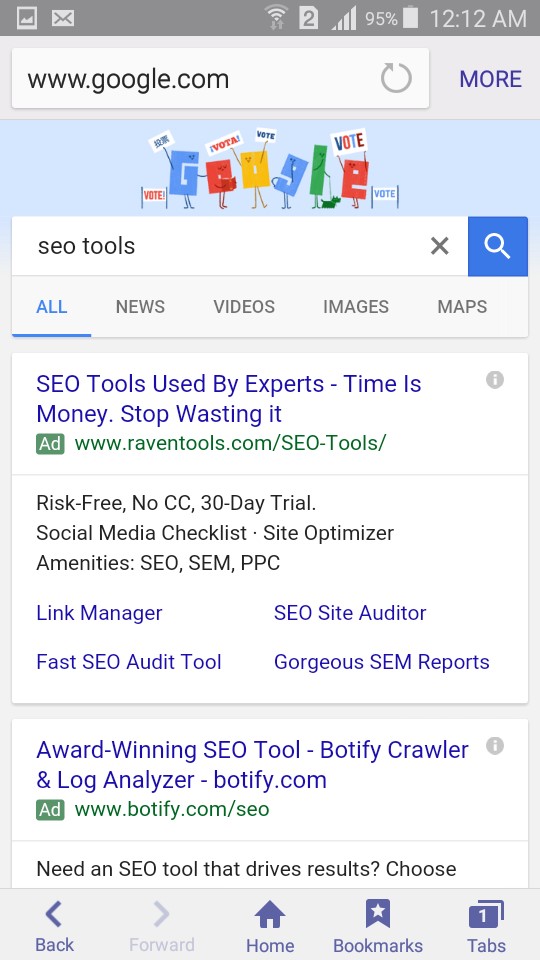

Where do I vote? Election Day Google doodle offers final reminder to vote

Like Sunday's and yesterday's doodles, the animated image leads to a "where do I vote" query and surfaces an interactive tool to find polling locations.

The post Where do I vote? Election Day Google doodle offers final reminder to vote appeared first on Search Engine Land.

from

http://searchengineland.com/vote-election-day-google-doodle-offers-final-reminder-vote-262744

5 Tips to Get Off the Content Marketing Struggle Bus & Create Content Your Audience Will Love

Posted by ronell-smith

The young man at the back of the ballroom in the Santa Monica, Calif., Loews hotel has a question he's been burning to ask, having held it for more than an hour as I delivered a presentation on why content marketing is invaluable for search.

When the time comes for Q&A, he nearly leaps out of his chair before announcing that he's asking a question for pretty much the entire room.

"How do I know what content I should create?" he asks. "I work at a small company. We have a team of content people, but we're typically told what to write without having any idea if it's what people want to read from us."

When asked what the results of their two blog posts per week were, his answer told a tale I hear often: "No one reads it. We don't know if that's because of the message or because [it's the] wrong audience for the content we're sharing."

In fishing and hunting circles, there's a saying that rings true today, tomorrow, and every day: "If you want to land trophy animals, you have to hunt in places where trophy animals reside."

Content marketing is not much different.

If you want to ensure that the right audience consumes the content you design, create and share, you have to "hunt" where they are. But to do so successfully, you must first know what they desire in the way of bait (content).

For those of us who've been involved in content marketing for a while now, this all sounds like fairly simplistic, 101-level stuff. But consider this: While we as marketers and technologists have access to sundry tools and platforms that help us discern all sorts of information, most small and mid-size business owners — and the folks who work at small and mid-size businesses — often lack the resources for most of the tools that could help flatten the learning curve for "What content should I create?"

If you spend any time fishing around online, you know very well that the problem isn't going away soon.

Image courtesy of Content Marketing Institute and Marketing Profs

For small and mid-size businesses looking to tackle this challenge, I detail a few tips below that I frequently share during presentations and that seem to work well for clients and prospects alike.

#1—Find your audience

First, let's get something straight: When it comes to creating content worth sharing and hopefully linking to, the goal is, now and forevermore, to deliver something the audience will love. Even if the topic is boring, your job is to deliver best-in-class content that's uniquely valuable.

Instead of guessing what content you should create for your audience (or would-be audience), take the time to find out where they hang out, both online and offline. Maybe it's Facebook groups, Twitter, forums, discussion groups, or Google Plus (Yes! Google Plus!).

Whether your brand provides HVAC services, computer repair, or custom email templates, there's a community of folks sharing information about it. And these folks, especially the ones in vibrant communities, can help you create amazing content.

As an example, the owner of a small automobile repair business might spend some time reading the most popular blogs in the category, while paying close attention to the information being shared, the top names sharing it, and the complaints, issues, or needs that commonly arise. The key here is to see who the major commenters, sharers, and influencers are, which can easily be gleaned after careful review of the blog comments over time.

From there, she could "follow" those influencers to popular forums and discussion boards, in addition to Facebook groups, Google Communities, and wherever else they congregate and converse.

The key with this audience research is to find out the following:

- Where they are

- What they share

- What unmet needs they might have

#2—Talk to them

Once you know where and who they are, start interacting with your audience. Maybe it's simply sharing their content on social media while including their "@" alias or answering a question in a group or forum. But over time, they'll come to know and recognize you and are likely to return the favor.

A word of warning is in order: Take off your sales-y hat. This is the time for sincere interaction and engagement, not hawking your wares.

Once you have a rapport with some of the members and/or influencers, don't be shy about asking if you can email them a quick question or two. If they open that door, keep it open with a short, simple note.

With emails of this sort, keep three things in mind:

- Be brief

- Be bold

- Be gone

Respect their time — and the fact that you don't have enough currency for much of an ask — by keeping the message short and to the point, while leaving the door open to future communication.

#3—Discern the job to be done

We've all heard the saying: "People don't know what they want until they've seen it."

Whether or not you like the bromide, it certainly rings true in the business world.

Too often a product or service that's supposedly the perfect remedy for some such ailment falls flat, even after focus groups, usability testing, surveys, and customer interviews.

The key is to focus less on what they say and more on what they're attempting to accomplish.

This is where the Jobs To Be Done theory comes in very handy.

Based primarily on the research of Harvard Business School professor Clayton Christensen, Jobs To Be Done (JTBD) is a framework for helping businesses view customers' motivations. In a nutshell, it helps us understand what job (why) a customer hires (reads, buys, uses, etc.) our product or service.

Christensen writes...

"Customers rarely make buying decisions around what the 'average' customer in their category may do — but they often buy things because they find themselves with a problem they would like to solve. With an understanding of the 'job' for which customers find themselves 'hiring' a product or service, companies can more accurately develop and market products well-tailored to what customers are already trying to do."

Christensen's latest book provides a thorough picture of the "Jobs To Be Done" theory

One of the best illustrations of the JTBD theory at work is the old saw we hear often in marketing circles: Customers don't buy a quarter-inch drill bit; they buy a quarter-inch hole.

This is important because we must clearly understand what customers are hoping to accomplish before we create content.

For the auto repair company preparing to create a guide for an expensive repair, it would be helpful to learn what workarounds currently exist, who are the people experiencing the problem (i.e., DIYers, Average Joes, technicians, etc.), how much the repair typically costs, and, most important, what the fix allows them to do.

For example, by talking to some of the folks in discussion groups, the business owner might learn that the problem is most common for off-roaders who don't feel comfortable making the expensive repair themselves. Therefore, many of them simply curtail the frequent use of their vehicles off-road.

Armed with this information, she would see that the JTBD is not merely the repair itself, but the ability to get away from work and into the woods on the weekend with their vehicles.

An ideal piece of content would then include the following elements:

- Prevention tips for averting the damage that would cause the repair

- A how-to video tutorial of the repair

- Locations specializing in the repair (hopefully her business is on the list with the most and best reviews)

A piece of content covering the elements above, that contains amazing graphics of folks kicking up dirt off-road with their vehicles, along with interviews of some of those folks as well, should be a winner.

#4—Promote, promote, promote

Now that you've created a winning piece of content, it's time to reach back out to the influencer(s) for their help in promoting the content.

First, though, ask if what you've created hits the threshold of incredibly useful and worth sharing. If you get a yes for both, you're in.

The next step is to find out who the additional influencers are who can help you promote and amplify the content.

One simple but effective way to accomplish this is to use BuzzSumo to discern prominent shares of your amplifiers' content. (You'll need to sign up for a free subscription, at least, but the tool is one of the best on the market.)

After you click "View Sharers," you'll be taken to a page that lists the folks who've re-shared the amplifier's content. You're specifically looking for folks who've not only shared their content but who (a) commonly share similar content, (b) have a sizable audience that would likely be interested in your content, and (c) might be amenable to sharing your content.

As you continue to cast your net far and wide, a few things to consider include:

- Don't abuse email. Maintain the relationships by offering to help them in return as often as, or more often than, you ask for help yourself.

- Share content multiple times via social media. Change the title each time content is shared, and look to determine which platforms work best for a given message, content type, etc.

- Use engagement, interaction, and relationships to inform future content pieces. Don't be afraid to ask, "What are some additional ideas you'd be excited to share and link to?"

#5—Review, revise, repeat

The toughest part of content marketing is often understanding that neither success nor failure is final. Even the best content and content promotion efforts can be improved in some way.

What's more, even if your content enjoys otherworldly success, it says nothing about the success or failure of future efforts.

Before you make the commitment to create content, there are two very important elements to adhere to:

1.) Only create content that's in line with your brand's goals. There are lots of good ideas for creating solid content, but many of those ideas won't help your brand. Stick to creating content that's in your brand's wheelhouse.

2.) This line of questioning should help you stay on track: "What content can I create that's (a) in line with my core business goals; (b) I'm uniquely qualified to offer; and (c) prospects and customers are hungry for?"

My philosophy of the three Rs:

- Review: Answer the questions "What went right?", "What can we do better?", and "What did we miss that should be covered in the future?"

- Revise: You'll need to determine the metrics that matter for your brand before creating content, but whatever they are, ensure they're easy to track, attainable, and, most important of all, have real meaning and value.

- Repeat: Successful content marketing efforts occur primarily through repetition. You do something once, learn from it, then improve with the next effort. Remember, the No. 1 reason we have less and less competition each year is many aren't willing to pay the price of doing the little things over and over.

This post is, by no means, an exhaustive plan of what it takes to create effortful content. However, for the vast majority of brands struggling with where to start, it's exactly what the doctor ordered.

from

http://tracking.feedpress.it/link/9375/4757605

Monday, November 7, 2016

SearchCap: Google voting, Google Shopping carousel & Samsung assistant

Below is what happened in search today, as reported on Search Engine Land and from other places across the web.

The post SearchCap: Google voting, Google Shopping carousel & Samsung assistant appeared first on Search Engine Land.

from

http://searchengineland.com/searchcap-google-voting-google-shopping-carousel-samsung-assistant-262718

For e-commerce success: SEO > aesthetics

For those in the online retail space, columnist Julian Connors shares his advice for making your e-commerce website SEO-friendly.

The post For e-commerce success: SEO > aesthetics appeared first on Search Engine Land.

from

http://searchengineland.com/e-commerce-success-seo-aesthetics-261927

[Reminder] Live webcast: It’s Not Too Late! Maximize Your Google Shopping in Time for the Holidays

Whether you’re a beginner or a paid search pro, it’s not too late to improve your Google Shopping campaigns in time for the holidays. Join us for this November 10 webcast and learn how to optimize for search success with Google Shopping ads. Our expert panel will discuss: how to create “quick wins” in your […]

The post [Reminder] Live webcast: It’s Not Too Late! Maximize Your Google Shopping in Time for the Holidays appeared first on Search Engine Land.

from

http://searchengineland.com/reminder-live-webcast-not-late-maximize-google-shopping-time-holidays-262691

Google to add presidential, senatorial & congressional election results directly in search

YouTube will also air live coverage of the elections starting at 7:00 p.m. ET on November 8.

The post Google to add presidential, senatorial & congressional election results directly in search appeared first on Search Engine Land.

from

http://searchengineland.com/google-add-presidential-senatorial-congressional-election-results-directly-search-262673

Why is free money that can be used on local search marketing being left on the table?

Up to $35 billion of co-op funds go unused every year. Wesley Young of the LSA takes a look at trends in the use of co-op funds, challenges and threats arising from digital media and opportunities to make co-op work for you.

The post Why is free money that can be used on local search marketing being left on the table? appeared first on Search Engine Land.

from

http://searchengineland.com/free-money-can-used-local-search-marketing-left-table-262187

Carousels of Google Shopping ads spotted on YouTube

Testing an expansion of Shopping Ads on YouTube.

The post Carousels of Google Shopping ads spotted on YouTube appeared first on Search Engine Land.

from

http://searchengineland.com/google-shopping-ads-carousel-youtube-262662

Holiday optimization tips for remaining competitive in the SERP

Columnist Lydia Jorden discusses ways to stay competitive in local search during the holidays, when competition for positioning gets particularly intense.

The post Holiday optimization tips for remaining competitive in the SERP appeared first on Search Engine Land.

from

http://searchengineland.com/holiday-optimization-tips-remaining-competitive-serp-262178

Will a Viv-enabled Galaxy S8 help Samsung rehabilitate its brand?

Samsung said it will be making more AI acquisitions as it plays catch-up with Google and others.

The post Will a Viv-enabled Galaxy S8 help Samsung rehabilitate its brand? appeared first on Search Engine Land.

from

http://searchengineland.com/will-viv-enabled-galaxy-s8-help-samsung-rehabilitate-brand-262637

Google & Facebook Squeezing Out Partners

Sections

- Just Make Great Content...

- Search Engine Engineering Fear

- Ignore The Eye Candy, It's Poisoned

- Below The Fold = Out Of Mind

- Coercion Which Failed

- Embrace, Extend, Extinguish

- Dumb Pipes, Dumb Partnerships

- "User" Friendly

- The Numbers Can't Work

- Mobile Search Index

- Tracking Users

Just Make Great Content...

Remember the whole shtick about good, legitimate, high-quality content being created for readers without concern for search engines - even as though search engines do not exist?

Whatever happened to that?

We quickly shifted from the above "ideology" to this:

The red triangle/exclamation point icon was arrived at after the Chrome team commissioned research around the world to figure out which symbols alarmed users the most.

Search Engine Engineering Fear

Google is explicitly spreading the message that they are doing testing on how to create maximum fear to try to manipulate & coerce the ecosystem to suit their needs & wants.

At the same time, the Google AMP project is being used as the foundation of effective phishing campaigns.

Scare users off of using HTTP sites AND host phishing campaigns.

Killer job Google.

Someone deserves a raise & some stock options. Unfortunately that person is in the PR team, not the product team.

Ignore The Eye Candy, It's Poisoned

I'd like to tell you that I was preparing the launch of https://amp.secured.mobile.seobook.com but awareness of past ecosystem shifts makes me unwilling to make that move.

I see it as arbitrary hoop jumping not worth the pain.

If you are an undifferentiated publisher without much in the way of original thought, then jumping through the hoops makes sense. But if you deeply care about a topic and put a lot of effort into knowing it well, there's no reason to do the arbitrary hoop jumping.

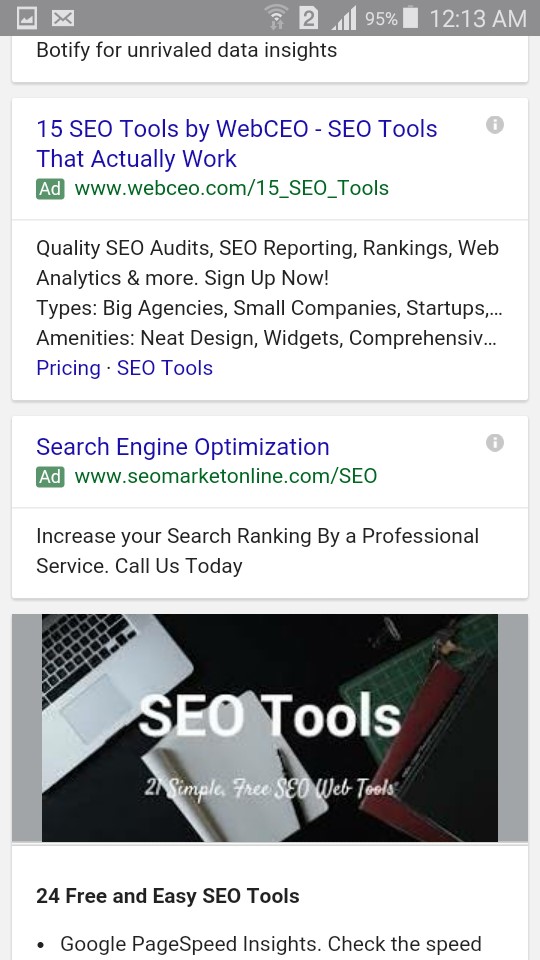

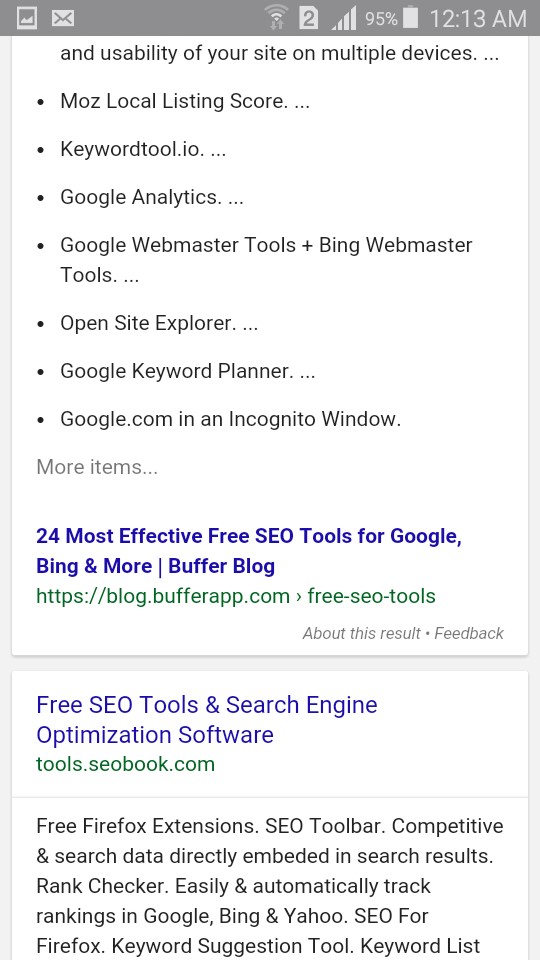

Remember how mobilegeddon was going to be the biggest thing ever? Well I never updated our site layout here & we still outrank a company which raised & spent 10s of millions of dollars for core industry terms like [seo tools].

Though it is also worth noting that after factoring in increased ad load with small screen sizes & the scrape graph featured answer stuff, a #1 ranking no longer gets it done, as we are well below the fold on mobile.

Below the Fold = Out of Mind

In the above example I am not complaining about ranking #5 and wishing I ranked #2, but rather stating that ranking #1 organically has little to no actual value when it is a couple screens down the page.

Google indicated their interstitial penalty might apply to pop ups that appear on scroll, yet Google welcomes itself to installing a toxic enhanced version of the Diggbar at the top of AMP pages, which persistently eats 15% of the screen & can't be dismissed. An attempt to dismiss the bar leads the person back to Google to click on another listing other than your site.

As bad as I may have made mobile search results appear earlier, I was perhaps being a little too kind. Google doesn't even have mass adoption of AMP yet & they already have 4 AdWords ads in their mobile search results AND when you scroll down the page they are testing an ugly "back to top" button which outright blocks a user's view of the organic search results.

What happens when Google suggests what people should read next as an overlay on your content & sells that as an ad unit where if you're lucky you get a tiny taste of the revenues?

Is it worth doing anything that makes your desktop website worse in an attempt to try to rank a little higher on mobile devices?

Given the small screen size of phones & the heavy ad load, the answer is no.

I realize that optimizing a site design for mobile or desktop is not mutually exclusive. But it is an issue we will revisit later on in this post.

Coercion Which Failed

Many people new to SEO likely don't remember the importance of using Google Checkout integration to lower AdWords ad pricing.

You either supported Google Checkout & got about a 10% CTR lift (& thus 10% reduction in click cost) or you failed to adopt it and got priced out of the market on the margin difference.
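As a rough sanity check on that margin difference, here is a minimal Python sketch. It assumes a simplified auction in which ad rank is simply bid times CTR, so an advertiser holding the same rank can bid less as CTR rises. The function name and the simplified model are illustrative assumptions, not Google's actual pricing formula.

```python
def cpc_reduction_from_ctr_lift(ctr_lift: float) -> float:
    """Fractional drop in the bid needed to hold the same ad rank.

    Assumes a simplified model: ad_rank = bid * ctr. This is an
    illustrative assumption, not Google's real auction formula.
    """
    if ctr_lift <= -1.0:
        raise ValueError("ctr_lift must be greater than -1.0")
    # Same rank before and after the lift:
    #   bid_old * ctr = bid_new * ctr * (1 + ctr_lift)
    #   => bid_new / bid_old = 1 / (1 + ctr_lift)
    return 1.0 - 1.0 / (1.0 + ctr_lift)


reduction = cpc_reduction_from_ctr_lift(0.10)
print(f"Click cost reduction for a 10% CTR lift: {reduction:.1%}")
```

Under that assumption a 10% CTR lift works out to a click cost roughly 9% lower, which is in the same ballpark as the round 10% figure above.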

And if you chose to adopt it, the bad news was you were then spending yet again to undo it when the service was no longer worth running for Google.

How about when Google first started hyping HTTPS & publishers using AdSense saw their ad revenue crash because the ads were no longer anywhere near as relevant?

Oops.

Not like Google cared much, as it is their goal to shift as much of the ad spend as they can onto Google.com & YouTube.

It is not an accident that Google funds an ad blocker which allows ads to stream through on Google.com while leaving ads blocked across the rest of the web.

Android Pay might be worth integrating. But then it also might go away.

It could be like Google's authorship. Hugely important & yet utterly trivial.

Faces help people trust the content.

Then they are distracting visual clutter that needs to be expunged.

Then they once again re-appear but ONLY on the Google Home Service ad units.

They were once again good for users!!!

Neat how that works.

Embrace, Extend, Extinguish

Or it could be like Google Reader. A free service which defunded all competing products & then was shut down because it didn't have a legitimate business model due to it being built explicitly to prevent competition. With the death of Google Reader many blogs also slid into irrelevancy.

Their FeedBurner acquisition was icing on the cake.

Techdirt is known for generally being pro-Google & they recently summed up FeedBurner nicely:

Thanks, Google, For Fucking Over A Bunch Of Media Websites - Mike Masnick

Ultimately Google is a horrible business partner.

And they are an even worse one if there is no formal contract.

Dumb Pipes, Dumb Partnerships

They tried their best to force broadband providers to be dumb pipes. At the same time they promoted regulation which will prevent broadband providers from tracking their own users the way that Google does, all the while broadening out Google's privacy policy to allow personally identifiable web tracking across their network. Once Google knew they would retain an indefinite tracking advantage over broadband providers they were free to rescind their (heavily marketed) free tier of Google Fiber & they halted the Google Fiber build out.

When Google routinely acts so anti-competitive & abusive it is no surprise that some of the "standards" they propose go nowhere.

You can only get screwed so many times before you adopt a spirit of ambivalence to the avarice.

Google is the type of "partner" that conducts security opposition research on their leading distribution partner, while conveniently ignoring nearly a billion OTHER Android phones with existing security issues that Google can't be bothered with patching.

Deliberately screwing direct business partners is far worse than coding algorithms which belligerently penalize some competing services all the while ignoring that the payday loan shop funded by Google leverages doorway pages.

"User" Friendly

BackChannel recently published an article foaming at the mouth promoting the excitement of Google's AI:

"This 2016-to-2017 Transition is going to move us from systems that are explicitly taught to ones that implicitly learn." ... the engineers might make up a rule to test against—for instance, that “usual” might mean a place within a 10-minute drive that you visited three times in the last six months. “It almost doesn’t matter what it is — just make up some rule,” says Huffman. “The machine learning starts after that.”

The part of the article I found most interesting was the following bit:

After three years, Google had a sufficient supply of phonemes that it could begin doing things like voice dictation. So it discontinued the [phone information] service.

Google launches "free" services with an ulterior data motive & then when it suits their needs, they'll shut it off and leave users in the cold.

As Google keeps advancing their AI, what do you think happens to your AMP content they are hosting? How much do they squeeze down on your payout percentage on those pages? How long until the AI is used to recap / rewrite content? What ad revenue do you get when Google offers voice answers pulled from your content but sends you no visitor?

The Numbers Can't Work

A recent Wall Street Journal article highlighting the fast ad revenue growth at Google & Facebook also mentioned how the broader online advertising ecosystem was doing:

Facebook and Google together garnered 68% of spending on U.S. online advertising in the second quarter—accounting for all the growth, Mr. Wieser said. When excluding those two companies, revenue generated by other players in the U.S. digital ad market shrank 5%

The issue is NOT that online advertising has stalled, but rather that Google & Facebook have choked off their partners from tasting any of the revenue growth. This problem will only get worse as mobile grows to a larger share of total online advertising:

By 2018, nearly three-quarters of Google’s net ad revenues worldwide will come from mobile internet ad placements. - eMarketer

Media companies keep trusting these platforms with greater influence over their business & these platforms keep screwing those same businesses repeatedly.

You pay to get likes, but that is no longer enough as edgerank declines. Thanks for adopting Instant Articles, but users would rather see live videos & read posts from their friends. You are welcome to pay once again to advertise to the following you already built. The bigger your audience, the more we will charge you! Oh, and your direct competitors can use people liking your business as an ad targeting group.

Worse yet, Facebook & Google are even partnering on core Internet infrastructure.

In his interview with Obama tonight, @billmaher suggested the news business should be not-for-profit. Mission accomplished, thank Facebook.— Downtown Josh Brown (@ReformedBroker) November 5, 2016

Any hope of AMP turning the corner on the revenue front is a "no go":

“We want to drive the ecosystem forward, but obviously these things don’t happen overnight,” Mr. Gingras said. “The objective of AMP is to have it drive more revenue for publishers than non-AMP pages. We’re not there yet”.

Publishers who are critical of AMP were reluctant to speak publicly about their frustrations, or to remove their AMP content. One executive said he would not comment on the record for fear that Google might “turn some knob that hurts the company.”

Look at that.

Leadership through fear once again.

At least they are consistent.

As more publishers adopt AMP, each publisher in the program will get a smaller share of the overall pie.

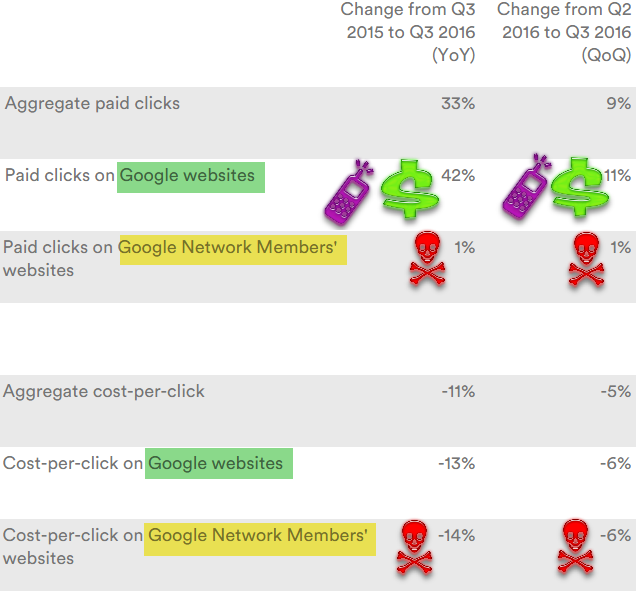

Just look at Google's quarterly results for their current partners. They keep showing Google growing their ad clicks at 20% to 40% while partners oscillate between -15% and +5% quarter after quarter, year after year.

In the past quarter Google grew their ad clicks 42% YoY by pushing a bunch of YouTube auto play video ads, faster search growth in third world markets with cheaper ad prices, driving a bunch of lower quality mobile search ad clicks (with 3 then 4 ads on mobile) & increasing the percent of ad clicks on "own brand" terms (while sending the FTC after anyone who agrees to not cross bid on competitors' brands).

The lower quality video ads & mobile ads in turn drove their average CPC on their sites down 13% YoY.
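To see how those two figures net out, here is a rough back-of-the-envelope sketch. It assumes ad revenue on Google's own sites is approximately paid clicks times average CPC, which ignores currency effects and shifts in traffic mix, so treat the result as an approximation rather than a reported number. The function name is made up for illustration.

```python
# Rough implied revenue change from click growth and CPC change.
# Assumption: revenue ~= paid clicks x average CPC (ignores currency
# effects and traffic-mix shifts).

def implied_revenue_change(click_growth: float, cpc_change: float) -> float:
    """Return the fractional revenue change implied by click and CPC changes."""
    return (1 + click_growth) * (1 + cpc_change) - 1


# Figures quoted above: ad clicks +42% YoY, average CPC -13% YoY.
google_sites = implied_revenue_change(click_growth=0.42, cpc_change=-0.13)
print(f"Implied change in ad revenue on Google's own sites: {google_sites:+.1%}")
```

Under that assumption, a 13% drop in CPC still leaves revenue on Google's own sites growing by over 20%, because click volume grew so much faster than price fell.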

The partner network is relatively squeezed out on mobile, which makes it shocking to see the partner CPC off more than core Google, with a 14% YoY decline.

What ends up happening is eventually the media outlets get sufficiently defunded to where they are sold for a song to a tech company or an executive at a tech company. Alibaba buying SCMP is akin to Jeff Bezos buying The Washington Post.

The Wall Street Journal recently laid off reporters. The New York Times announced they were cutting back local cultural & crime coverage.

If news organizations of that caliber can't get the numbers to work then the system has failed.

The Guardian is literally incinerating over 5 million pounds per month. ABC is staging fake crime scenes (that's one way to get an exclusive).

The Tribune Company, already through bankruptcy & perhaps the dumbest of the lot, plans to publish thousands of AI assisted auto-play videos in their articles every day. That will guarantee their user experience on their owned & operated sites is worse than just about anywhere else their content gets distributed to, which in turn means they are not only competing against themselves but they are making their own site absolutely redundant & a chore to use.

That the Denver Guardian (an utterly fake paper running fearmongering false stories) goes viral is just icing on the cake.

Look at this brazen, amazing garbage. Facebook has become the world's leading distributor of lies. https://t.co/oueWUiydJO— Matt Pearce (@mattdpearce) November 6, 2016

These tech companies are literally reshaping society & are sucking the life out of the economy, destroying adjacent markets & bulldozing regulatory concerns, all while offloading costs onto everyone else around them.

"We are going to raise taxes on the middle class" -Hillary Clinton #NeverHilla... (Vine by @USAforTrump2016) https://t.co/veEiZnfbkH— JKO (@jko417) November 6, 2016

An FTC report recommended suing Google for their anti-competitive practices, but no suit was brought. The US Copyright Office Register was relieved of her job after she went against Google's views on set top boxes.

And in spite of the growing importance of tech, media coverage of the industry is a trainwreck:

This is what it’s like to be a technology reporter in 2016. Freebies are everywhere, but real access is scant. Powerful companies like Facebook and Google are major distributors of journalistic work, meaning newsrooms increasingly rely on tech giants to reach readers, a relationship that’s awkward at best and potentially disastrous at worst.

Being a conduit breeds exclusives. Challenging the grand narrative gets one blackballed.

Mobile Search Index

Google announced they are releasing a mobile first search index:

Although our search index will continue to be a single index of websites and apps, our algorithms will eventually primarily use the mobile version of a site’s content to rank pages from that site, to understand structured data, and to show snippets from those pages in our results. Of course, while our index will be built from mobile documents, we're going to continue to build a great search experience for all users, whether they come from mobile or desktop devices.

There are some forms of content that simply don't work well on a 350 pixel wide screen, unless they use a pinch to zoom format. But using that format is seen as not being mobile friendly.

Imagine you have an auto part database which lists alternate part numbers, price, stock status, nearest store with part in stock, time to delivery, etc. ... it is exceptionally hard to get that information to look good on a mobile device. And good luck if you want to add sorting features on such a table.

The theory that ranking mobile results from the desktop version of a page is flawed, because users might find something which is only available on the desktop version of a site, is a valid point. BUT, at the same time, a publisher may need to simplify the mobile site & hide data to improve usability on small screens & then only allow certain data to become visible through user interactions. Not showing those automotive part databases to desktop users would ultimately make desktop search results worse for users by leaving huge gaps in the search results. And a search engine choosing to not index the desktop version of a site because there is a mobile version is equally short sighted. Desktop users would no longer be able to find & compare information from those automotive parts databases.

Once again money drives search "relevancy" signals.

Since Google will soon make 3/4 of their ad revenues on mobile that should be the primary view of the web for everyone else & alternate versions of sites which are not mobile friendly should be disappeared from the search index if a crappier lite mobile-friendly version of the page is available.

Amazon converts well on mobile in part because people already trust Amazon & already have an account registered with them. Most other merchants won't be able to convert anywhere near as well on mobile as they do on desktop, so if you have to choose between a mobile friendly version that leaves differentiated aspects hidden or a desktop friendly version that is differentiated & establishes a relationship with the consumer, the deeper & more engaging desktop version is the way to go.

The heavy ad load on mobile search results combines with the low conversion rates on mobile to make building a relationship on desktop that much more important.

Even TripAdvisor is struggling to monetize mobile traffic, monetizing it at only about 30% to 33% of the rate at which they monetize desktop & tablet traffic. Google already owns most of the profits from that market.

Webmasters are better off NOT going mobile friendly than going mobile friendly in a way that compromises the usefulness of their desktop site.

Mobile-first: with ONLY a desktop site you'll still be in the results & be findable. Recall how mobilegeddon didn't send anyone to oblivion?— Gary Illyes (@methode) November 6, 2016

I am not the only one suggesting an over-simplified mobile design that carries over to a desktop site is a losing proposition. Consider Nielsen Norman Group's take:

in the current world of responsive design, we’ve seen a trend towards insufficient information density and simplifying sites so that they work well on small screens but suboptimally on big screens.

Tracking Users

Publishers are getting squeezed to subsidize the primary web ad networks. But the narrative is that as cross-device tracking improves some of those benefits will eventually spill back out into the partner network.

I am rather skeptical of that theory.

Facebook already makes 84% of their ad revenue from mobile devices where they have great user data.

They are paying to bring new types of content onto their platform, but they are only just now beginning to get around to test pricing their Audience Network traffic based on quality.

Priorities are based on business goals and objectives.

Both Google & Facebook paid fines & faced public backlash for how they track users. Those tracking programs were considered high priority.

When these ad networks are strong & growing quickly they may be able to take a stand. But when growth slows & stock prices crumble, data security becomes less important amid downsizing, shattered morale & fleeing talent. Further, creating alternative revenue streams becomes vital "to save the company" even if it means selling user data to dangerous dictators.

The other big risk of such tracking is how data can be used by other parties.

Spooks preferred to use the Google cookie to spy on users. And now Google allows personally identifiable web tracking.

Data is being used in all sorts of crazy ways the central ad networks are utterly unaware of. These crazy policies are not limited to other countries. Buying dog food with your credit card can lead to pet licensing fees. Even cheerful "wellness" programs may come with surprises.

from

http://feedproxy.google.com/~r/seobook/seobook/~3/62Mm_gTDvIQ/securing-fear-and-mobile-monetization

Where do I vote? Google posts Election Day reminder doodle 2 days in a row

The U.S. 2016 Election Day reminder doodle surfaces Google's voter registration tool that helps searchers find local polling locations.

The post Where do I vote? Google posts Election Day reminder doodle 2 days in a row appeared first on Search Engine Land.

from

http://searchengineland.com/vote-google-posts-election-day-reminder-doodle-2-days-row-262629

Can company structure spawn cannibalization? Most definitely.

With so many business structure possibilities, it can be particularly complicated to consolidate content across departments, especially if it’s created in isolation. Internal structure can therefore dictate content efficiency, and decentralized structures can, in turn, spawn duplicate, overlapping or conflicting content. What is cannibalization? Cannibalization is a term coined by the digital community to refer […]

The post Can company structure spawn cannibalization? Most definitely. appeared first on Search Engine Land.

from

http://searchengineland.com/can-company-structure-spawn-cannibalization-definitely-262379

Google's War on Data and the Clickstream Revolution

Posted by rjonesx.

Existential threats to SEO

Rand called "Not Provided" the First Existential Threat to SEO in 2013. While 100% Not Provided was certainly one of the largest and most egregious data grabs by Google, it was part of a long and continued history of Google pulling data sources which benefit search engine optimizers.

A brief history

- Nov 2010 - Deprecate search API

- Oct 2011 - Google begins Not Provided

- Feb 2012 - Sampled data in Google Analytics

- Aug 2013 - Google Keyword Tool closed

- Sep 2013 - Not Provided ramped up

- Feb 2015 - Link Operator degraded

- Jan 2016 - Search API killed

- Mar 2016 - Google ends Toolbar PageRank

- Aug 2016 - Keyword Planner restricted to paid

I don't intend to say that Google made any of these decisions specifically to harm SEOs, but that the decisions did harm SEO is inarguable. In our industry, like many others, data is power. Without access to SERP, keyword, and analytics data, our and our industry's collective judgement is clouded. A recent survey of SEOs showed that data is more important to them than ever, despite these data retractions.

So how do we proceed in a world in which we need data more and more but our access is steadily restricted by the powers that be? Perhaps we have an answer — clickstream data.

What is clickstream data?

First, let's give a quick definition of clickstream data to those who are not yet familiar. The most straightforward definition I've seen is:

"The process of collecting, analyzing, and reporting aggregate data about which pages users visit in what order."

– (TechTarget: What is Clickstream Analysis)

If you've spent any time analyzing your funnel or looking at how users move through your site, you have utilized clickstream data in performing clickstream analysis. However, traditionally, clickstream data is restricted to sites you own. But what if we could see how users behave across the web — not just our own sites? What keywords they search, what pages they visit, and how they navigate the web? With that data, we could begin to fill in the data gaps previously lost to Google.

I think it's worthwhile to point out the concerns presented by clickstream data. As a webmaster, you must be thoughtful about what you do with user data. You have access to the referrers which brought visitors to your site, you know what they click on, you might even have usernames, emails, and passwords. In the same manner, being vigilant about anonymizing data and excluding personally identifiable information (PII) has to be the first priority in using clickstream data. Moz and our partners remain vigilant, including our latest partner Jumpshot, whose algorithms for removing PII are industry-leading.

What can we do?

So let's have some fun, shall we? Let's start to talk about all the great things we can do with clickstream data. Below, I'll outline a half dozen or so insights we've gleaned from clickstream data that are relevant to search marketers and Internet users in general. First, let me give credit where credit is due — the data for these insights have come from 2 excellent partners: Clickstre.am and Jumpshot.

Popping the filter bubble

It isn't very often that the interests of search engine marketers and social scientists intersect, so this is a rare opportunity for me to blend my career with my formal education. Search engines like Google personalize results in a number of ways. We regularly see personalization of search results in the form of geolocation, previous sites visited, or even SERP features tailored to things Google knows about us as users. One question posed by social scientists is whether this personalization creates a filter bubble, where users only see information relevant to their interests. Of particular concern is whether this filter bubble could influence important informational queries like those related to political candidates. Does Google show uniform results for political candidate queries, or do they show you the results you want to see based on their personalization models?

Well, with clickstream data we can answer this question quite clearly by looking at the number of unique URLs which users click on from a SERP. Personalized keywords should result in a higher number of unique URLs clicked, as users see different URLs from one another. We randomly selected 50 search-click pairs (a searched keyword and the URL the user clicked on) for the following keywords to get an idea of how personalized the SERPs were.

- Dropbox - 10

- Google - 12

- Donald Trump - 14

- Hillary Clinton - 14

- Facebook - 15

- Note 7 - 16

- Heart Disease - 16

- Banks Near Me - 107

- Landscaping Company - 260

As you can see, highly personalized keywords like "banks near me" or "landscaping company," which depend on location, receive a large number of unique URLs clicked. This is to be expected and validates the model to a degree. However, candidate names like "Hillary Clinton" and "Donald Trump" are personalized no more than major brands like Dropbox, Google, or Facebook and products like the Samsung Note 7. It appears that the hypothetical filter bubble has burst: most users see the same results as one another.
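For readers who want to try this on their own data, the unique-URL count can be sketched in a few lines. This is a minimal illustration, not Moz's actual pipeline: the function name, the input shape (a list of keyword and clicked-URL pairs), and the sample rows are all made up.

```python
from collections import defaultdict


def unique_clicked_urls(pairs):
    """Count distinct clicked URLs per keyword from (keyword, url) pairs."""
    urls_by_keyword = defaultdict(set)
    for keyword, url in pairs:
        urls_by_keyword[keyword.lower()].add(url)
    return {kw: len(urls) for kw, urls in urls_by_keyword.items()}


# Made-up sample data, for illustration only.
sample_pairs = [
    ("dropbox", "https://www.dropbox.com/"),
    ("dropbox", "https://www.dropbox.com/"),
    ("dropbox", "https://www.dropbox.com/login"),
    ("banks near me", "https://bank-a.example/branches"),
    ("banks near me", "https://bank-b.example/locations"),
    ("banks near me", "https://bank-c.example/"),
]

counts = unique_clicked_urls(sample_pairs)
for keyword, n in sorted(counts.items(), key=lambda kv: kv[1]):
    print(f"{keyword}: {n} unique URLs clicked")
```

To compare keywords fairly, each keyword should be sampled with the same number of search-click pairs, since a larger sample will naturally surface more distinct URLs.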

Biased search behavior

But is that all we need to ask? Can we learn more about the political behavior of users online? It turns out we can. One of the truly interesting features of clickstream data is the ability to do "also-searched" analysis. We can look at clickstream data and determine whether or not a person or group of people are more likely to search for one phrase or another after first searching for a particular phrase. We dove into the clickstream data to see if there were any material differences between subsequent searches of individuals who looked for "donald trump" and "hillary clinton," respectively. While the majority of the searches were quite the same, as you would expect, searching for things like "youtube" or "facebook," there were some very interesting differences.

For example, individuals who searched for "donald trump" were twice as likely to go on to search for "Omar Mateen" as individuals who previously searched for "hillary clinton." Omar Mateen was the Orlando shooter. Individuals who searched for "Hillary Clinton" were about 60% more likely to search for "Philando Castile," the victim of a police shooting and, in particular, one of the more egregious examples. So it seems, at least from this early evidence, that people carry their biases to the search engines, rather than search engines pushing bias back upon them.
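One simple way to express an "also-searched" comparison is as a ratio of follow-up rates between two groups of searchers. The sketch below assumes each user's searches are available as an ordered list of queries. The function names and the placeholder queries are hypothetical, and this is not presented as the method Moz or its partners actually use.

```python
def followup_rate(sessions, seed, followup):
    """Share of sessions containing `seed` where `followup` is searched afterwards."""
    seeded = 0
    followed = 0
    for queries in sessions:
        if seed in queries:
            seeded += 1
            first = queries.index(seed)
            if followup in queries[first + 1:]:
                followed += 1
    return followed / seeded if seeded else 0.0


def relative_likelihood(sessions, seed_a, seed_b, followup):
    """How many times more likely `followup` is after `seed_a` than after `seed_b`."""
    rate_b = followup_rate(sessions, seed_b, followup)
    if rate_b == 0:
        return None  # undefined when the comparison group never searches it
    return followup_rate(sessions, seed_a, followup) / rate_b


# Made-up ordered query lists, one per user, with placeholder queries.
sessions = [
    ["candidate a", "topic x"],
    ["candidate a", "youtube", "topic x"],
    ["candidate a", "facebook"],
    ["candidate a"],
    ["candidate b", "topic x"],
    ["candidate b", "youtube"],
    ["candidate b", "facebook"],
    ["candidate b"],
]

ratio = relative_likelihood(sessions, "candidate a", "candidate b", "topic x")
print(f"Relative likelihood of the follow-up search: {ratio:.1f}x")
```

A ratio of 2.0 corresponds to "twice as likely," and a ratio of 1.6 to "about 60% more likely."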

Getting a real click-through rate model

Search marketers have been looking at click-through rate (CTR) models since the beginning of our craft, trying to predict traffic and earnings under a set of assumptions that have all but disappeared since the days of 10 blue links. With the advent of SERP features like answer boxes, the knowledge graph, and Twitter feeds in the search results, it has been hard to garner exactly what level of traffic we would derive from any given position.

With clickstream data, we have a path to uncovering those mysteries. For starters, the click-through rate curve is dead. Sorry folks, but it has been for quite some time and any allegiance to it should be categorized as willful neglect.

We have to begin building somewhere, so at Moz we start with opportunity metrics (like the one introduced by Dr. Pete, which can be found in Keyword Explorer) which depreciate the potential search traffic available from a keyword based on the presence of SERP features. We can use clickstream data to learn the non-linear relationship between SERP features and CTR, which is often counter-intuitive.

Let's take a quick quiz.

Which SERP has the highest organic click-through rate?

- A SERP with just news

- A SERP with just top ads

- A SERP with sitelinks, knowledge panel, tweets, and ads at the top

Strangely enough, it's the last that has the highest click-through rate to organic. Why? It turns out that the only queries that get that bizarre combination of SERP features are for important brands, like Louis Vuitton or BMW. Consequently, nearly 100% of the click traffic goes to the #1 sitelink, which is the brand website.

Perhaps even more strangely, pages with top ads deliver more organic clicks than those with just news. News tends to entice users more than advertisements.

It would be nearly impossible to come to these revelations without clickstream data, but now we can use the data to find the unique relationships between SERP features and click-through rates.
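As a rough illustration of how such relationships can be pulled out of clickstream data, the sketch below groups SERP views by the exact set of features shown and computes the share that ended in an organic click. The record format, feature names, and sample rows are assumptions made for the example. The sample is made up and only mirrors the ordering described above, so it is not Moz's data.

```python
from collections import defaultdict


def organic_ctr_by_features(serp_views):
    """Organic click-through rate for each distinct combination of SERP features.

    `serp_views` is an iterable of (features, clicked_organic) records.
    """
    totals = defaultdict(lambda: [0, 0])  # feature set -> [views, organic clicks]
    for features, clicked_organic in serp_views:
        key = frozenset(features)
        totals[key][0] += 1
        totals[key][1] += int(clicked_organic)
    return {key: clicks / views for key, (views, clicks) in totals.items()}


# Made-up sample records, for illustration only.
brand_serp = {"sitelinks", "knowledge_panel", "tweets", "top_ads"}
sample_views = [
    ({"news"}, False),
    ({"news"}, True),
    ({"news"}, False),
    ({"news"}, False),
    ({"top_ads"}, True),
    ({"top_ads"}, False),
    (brand_serp, True),
    (brand_serp, True),
]

ctr = organic_ctr_by_features(sample_views)
for features, rate in sorted(ctr.items(), key=lambda kv: kv[1]):
    print(f"{', '.join(sorted(features))}: {rate:.0%} organic CTR")
```

Keying on the whole feature set, rather than on each feature separately, is what lets a combination behave differently from the sum of its parts.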

In production: Better volume data

Perhaps Moz's most well-known usage of clickstream data is our volume metric in Keyword Explorer. There has been a long history of search marketers using Google's keyword volume as a metric to predict traffic and prioritize keywords. While (not provided) hit SEOs the hardest, it seems like the recent Google Keyword Planner ranges are taking a toll as well.

So how do we address this with clickstream data? Unfortunately, it isn't as cut-and-dried as simply replacing Google's data with Jumpshot or a 3rd party provider. There are several steps involved. Here are just a few.

- Data ingestion and clean-up

- Bias removal

- Modeling against Google Volume

- Disambiguation corrections

I can't stress enough how much attention to detail needs to go into these steps in order to make sure you're adding value with clickstream data rather than simply muddling things further. But I can say with confidence that our complex solutions have had a profoundly positive impact on the data we provide. Let me give you some disambiguation examples that were recently uncovered by our model.

| Keyword | Google Value | Disambiguated |
| --- | --- | --- |
| cars part | 135000 | 2900 |
| chopsuey | 74000 | 4400 |
| treatment for mononucleosis | 4400 | 720 |
| lorton va | 9900 | 8100 |
| definition of customer service | 2400 | 1300 |
| marion county detention center | 5400 | 4400 |
| smoke again lyrics | 1900 | 880 |
| should i get a phd | 480 | 320 |
| oakley crosshair 2.0 | 1000 | 480 |
| barter 6 download | 4400 | 590 |
| how to build a shoe rack | 880 | 720 |

Look at the huge discrepancies here for the keyword "cars part." Most people search for "car parts" or "car part," but Google groups together the keyword "cars part," giving it a ridiculously high search value. We were able to use clickstream data to dramatically lower that number.

The same is true for "chopsuey." Most people search for it, correctly, as two separate words: "chop suey."

These corrections to Google search volume data are essential to make accurate, informed decisions about what content to create and how to properly optimize it. Without clickstream data on our side, we would be grossly misled, especially in aggregate data.
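One plausible way to picture a disambiguation correction is to split a grouped volume across its variants in proportion to how often each variant actually shows up in clickstream searches. The sketch below makes that assumption explicit. It is not Moz's actual model: the function name is hypothetical, the clickstream counts are made up, and it treats the 135000 figure from the table as the volume of the whole keyword group.

```python
def disambiguate(grouped_volume, clickstream_counts):
    """Split a grouped search volume across variants by clickstream share."""
    total = sum(clickstream_counts.values())
    if total == 0:
        return {}
    return {
        variant: round(grouped_volume * count / total)
        for variant, count in clickstream_counts.items()
    }


# Made-up clickstream search counts for each variant, for illustration only.
observed = {"car parts": 900, "car part": 80, "cars part": 20}

# Assumption: 135000 is the volume Google reports for the whole group.
corrected = disambiguate(135000, observed)
for variant, volume in corrected.items():
    print(f"{variant}: {volume}")
```

With these invented counts the rare variant "cars part" drops from 135000 to 2700, which is the same kind of correction shown in the table, though the real figures come from a more involved model.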

How much does this actually impact Google search volume? Roughly 25% of all keywords we process from Google data are corrected by clickstream data. This means tens of millions of keywords monthly.

Moving forward

The big question for marketers is now not only how do we respond to losses in data, but how do we prepare for future losses? A quick survey of SEOs revealed some of their future concerns...

Luckily, a blended model of crawled and clickstream data allows Moz to uniquely manage these types of losses. SERP and suggest data are all available through clickstream sources, piggybacking on real results rather than performing automated ones. Link data is already available through third-party indexes like MozScape, but can be improved even further with clickstream data that reveals the true popularity of individual links. All that being said, the future looks bright for this new blended data model, and we look forward to delivering upon its promises in the months and years to come.

And finally, a question for you...

As Moz continues to improve upon Keyword Explorer, we want to make that data more easily accessible to you. We hope to soon offer you an API, which will bring this data directly to you and your apps so that you can do more research than ever before. But we need your help in tailoring this API to your needs. If you have a moment, please answer this survey so we can piece together something that provides just what you need.


from

http://tracking.feedpress.it/link/9375/4752463

Friday, November 4, 2016

SearchCap: Google mobile index, back to top button & a Doodle

Below is what happened in search today, as reported on Search Engine Land and from other places across the web.

The post SearchCap: Google mobile index, back to top button & a Doodle appeared first on Search Engine Land.

from

http://searchengineland.com/searchcap-google-mobile-index-back-top-button-doodle-262606

Get the most from your social media campaigns this holiday shopping season

How can social media help your business capitalize on the holiday season and see a major revenue bump? In this white paper, G/O Digital has laid out the best social media insights to allow for complete optimization of your holiday marketing campaign. You’ll learn: how shoppers engage with content on Facebook, Instagram and Twitter; which […]

The post Get the most from your social media campaigns this holiday shopping season appeared first on Search Engine Land.

from

http://searchengineland.com/get-social-media-campaigns-holiday-shopping-season-262594

Google begins mobile-first indexing, using mobile content for all search rankings

While called an 'experiment,' it’s actually the first move in Google's planned shift to looking primarily at mobile content, rather than desktop, when deciding how to rank results.

The post Google begins mobile-first indexing, using mobile content for all search rankings appeared first on Search Engine Land.

from

http://searchengineland.com/google-begins-experimenting-mobile-first-index-hopes-expand-upcoming-months-262527

Meet a Landy Award winner: Noble Studios drives traffic to Tahoe South to win Best SEO Initiative for Small Business

Noble Studios introduced a new approach to blogging and content creation for Tahoe South that resulted in a 134% increase in mobile site traffic.

The post Meet a Landy Award winner: Noble Studios drives traffic to Tahoe South to win Best SEO Initiative for Small Business appeared first on Search Engine Land.

from

http://searchengineland.com/meet-landy-award-winner-noble-studios-drives-traffic-tahoe-south-win-best-seo-initiative-small-business-262558

Successful SEO programs require content that supports the entire buy cycle

Columnist Joe Goers argues that many websites are focusing too much on the bottom of the funnel -- to the detriment of their SEO success.

The post Successful SEO programs require content that supports the entire buy cycle appeared first on Search Engine Land.

from

http://searchengineland.com/successful-seo-programs-require-content-supports-entire-buy-cycle-261990

Search in Pics: SEO Halloween, Google bomb, burger & basketball

In this week’s Search In Pictures, here are the latest images culled from the web, showing what people eat at the search engine companies, how they play, who they meet, where they speak, what toys they have and more. Google indoor basketball: Source: Instagram A Google nuclear bomb? Source: Instagram A branded Google burger meal: […]

The post Search in Pics: SEO Halloween, Google bomb, burger & basketball appeared first on Search Engine Land.

from

http://searchengineland.com/search-pics-seo-halloween-google-bomb-burger-basketball-262575

Google tests a ‘back to top’ button in the mobile search interface

In a new user interface test, Google shows a button to jump the mobile searcher back to the top of the search results page.

The post Google tests a ‘back to top’ button in the mobile search interface appeared first on Search Engine Land.

from

http://searchengineland.com/google-tests-back-top-button-mobile-search-interface-262553

Walter Cronkite Google doodle marks 100th birthday of America’s favorite journalist

Cronkite became known as 'the most trusted man in America' during his career, reporting on the events that shaped our world.

The post Walter Cronkite Google doodle marks 100th birthday of America’s favorite journalist appeared first on Search Engine Land.

from

http://searchengineland.com/walter-cronkite-google-doodle-marks-100th-birthday-americas-favorite-journalist-262542

How Can Small Businesses/Websites Compete with Big Players in SEO? - Whiteboard Friday

Posted by randfish

It may seem like an impossible uphill battle to compete with big sites in the SERPs, but there are benefits to running a smaller site that can make a tremendous difference to your SEO. In today's Whiteboard Friday, Rand explains how small businesses and websites can target opportunities the big sites can't, in spite of their natural advantages.


Video Transcription

Howdy, Moz fans, and welcome to another edition of Whiteboard Friday. This week we're going to chat about how you, as a small site, could compete against big sites.

Big site advantages

- Domain authority

- Quantity and diversity of the links that are coming to them, which bias engines to generally rank their content higher than they ordinarily might if it were on a brand-new site or a smaller site that they didn't recognize.

- Trustworthiness. They've built brand associations in the space through advertising and through their size and scale and their reputation over time and over years that means that people have these biases towards trusting that brand, liking that brand, buying from that brand.

- Financial resources that likely you are not going to have as a small website. If we're talking about Expedia here versus randstravels.com, they have tens if not hundreds of millions of dollars that they can put towards their web marketing efforts and their SEO efforts, and I have, well, my bad self.

- Ability to invest, but usually only if and when something is a major priority. If it's not the case that something is a major priority, then Expedia is probably not going to invest in that, and this is where a lot of your advantages come from.

Small site advantages

- Nimbleness. You can choose to say, "Here are all the things we could be investing in right now, and you know what, this is the highest priority right now," and a week later decide this is no longer the highest priority. We're going to change direction and go pursue this instead. You don't have to check with a manager or a team or a boss. You don't have three layers of management that you have to run that approval process through. You can be extremely nimble. Small teams can get remarkable amounts done in small amounts of time compared to much larger teams.

- Creativity. You are allowed to go outside the boundaries of what's been set. If you have an idea, you can execute on it. If you have an idea at Expedia, you need to get a lot of approval before you can go after it, and you better make sure that all of the rest of your work is done, too.

- Focus. As a small business, you can choose to focus your web marketing efforts on one specific thing. So if you know that SEO is where all of your opportunity lies, you can ignore your other web marketing channels, you can ignore retargeting for a few weeks, you can ignore your PPC accounts for a few weeks and simply focus on SEO. At Expedia, a marketing manager is going to have a long list of things that they need to do that they are responsible for, and they can't simply ignore all their duties to focus on something new.

- Niche appeal. So yes, Expedia built up their brand around travel, and they have associations around hotels and flights and bookings and all this kind of stuff. But you can choose to take a small slice of those for your particular business and say, "We're going to focus exclusively on this, and we're going to become the authority in this particular niche," which gives you a bunch of advantages that we'll talk about.

- Authenticity on your side. So a big brand will often have big brand associations. A smaller brand can build very strong positive associations with, granted, a smaller audience, but you don't need to monetize as many or as fast or as directly as a big brand needs to. You can concentrate on building your brand's appeal to your very specific niche. If you monetize them well enough over time, you can build a great business, a small business but a great small business.

5 ways to compete

So, five ways to compete.

1. Target keywords the big sites are unwilling, unable, or so far aren't trying to compete on.

First off let's talk about keywords. So in the SEO keyword universe, there are going to be keywords that a big brand, like in this example Expedia, is unwilling, unable, or has chosen not to target yet because they have an indirect path to ROI or legal issues or PR issues. Those can be things like:

- Long-tail keywords. So maybe Expedia is definitely targeting something like "Istanbul city guide," but they are definitely not targeting something like "best shops to visit in Istanbul's Grand Bazaar." By the way, I looked that up, and I could not find a great list. So if someone wants to make a list of those, that would be real handy because the Grand Bazaar, very hard to find things.

- Comparison keywords. So Expedia can't go after their competitors' brand names, and they generally wouldn't choose to compare themselves to another brand. So Venere flights versus Expedia flights, they're just not going to have a page on that. But you can have a page on that, and you can compare those things to each other. That's an advantage that a small website is going to have over a larger one.

- Editorial keywords. So Expedia has business relationships with a lot of different hotels. Therefore, it is not in their interest to rank hotels in a particular locale from 1 to 10 or from 1 to 100. As a small website, you don't have that constraint, and you can go after the types of keywords that your bigger competitors are biased against pursuing, and that can be very powerful as well.

2. Aim for authority and brand association in a very specific niche

So like we talked about, Expedia is focused on travel. But Rand's Travels can focus on city-specific itineraries or ranking travel destinations or some other thin slice of a niche that Expedia can't build that same brand equity in.

3. Pursue indirect/harder-to-monetize content

So Expedia knows that they're generally pursuing not just keywords, but content that helps people buy directly from Expedia, and they're going to be looking at that path to conversion. But you might say, "I don't care if it takes three visits or four visits or five visits for someone to convert. I want to build trust. I want to build authority in my niche. Therefore, I can go after content that Expedia would not go after." Their content might cover hotels, flights, cars, and cities. Yours might cover recommended websites, travel education, news, tactics, tips, and neighborhoods.

4. Go deeper and provide more value with content than what your big competition can afford to scale

You can invest more in a single piece of content than Expedia or a big brand ever could. So you take your small niche and you say, "This keyword or this set of keywords is extremely important to me. This search intent is extremely important. I'm going to create 10x content. I'm going to put 10 times more effort and energy and resources into building that than what my big brand competitor can do. If they are a two-star resource, I'm going to be a five-star resource."

5. Build relationships 1-on-1 that big competition will never invest in

In addition to that element of building better content, you can also build better, more direct relationships with the people you need those relationships with. So Expedia goes through their PR team, and they have their teams of folks that do their relationships. But you can go direct. You can say, "I'm Rand's Travels. I'm going to go meet with people in Istanbul while I'm there and forge those relationships personally and build those relationships up on social media and have conversations and leave blog comments, and that will reinforce my authenticity and my niche appeal."

That's a huge advantage as well, and that can help to amplify the reach of your content and to get you visibility on these keywords and this content that your competitors simply can't touch because they're too big. They need to do this stuff at scale. When you need to do things at scale, you simply can't focus in the same way, and that's where your big advantages come from as a small website.

Now, I'm looking forward to your comments and to hearing more from you about how you've been able to compete against the big guys, and we'll see you again next week for another edition of Whiteboard Friday. Take care.

Video transcription by Speechpad.com

from

http://tracking.feedpress.it/link/9375/4738114

Thursday, November 3, 2016

SearchCap: Google Home, Possum changes & expanded text ads

Below is what happened in search today, as reported on Search Engine Land and from other places across the web.

The post SearchCap: Google Home, Possum changes & expanded text ads appeared first on Search Engine Land.

from

http://searchengineland.com/searchcap-google-home-possum-changes-expanded-text-ads-262525

Expanded text ads that kick butt

Wondering how to write great ads that convert in the age of expanded text ads? Columnist Mona Elesseily shares her tips and observations.

The post Expanded text ads that kick butt appeared first on Search Engine Land.

from

http://searchengineland.com/expanded-text-ads-that-kick-butt-262179

Introducing Open Garden

A new movement in marketing is growing — and you’re probably starting to hear about it in the media, at events and in the boardroom. Open Garden is a blueprint for a marketing and advertising ecosystem that’s connected at the data layer. It’s a simple, transformative idea. By building your own Open Garden, you can […]

The post Introducing Open Garden appeared first on Search Engine Land.

from

http://searchengineland.com/introducing-open-garden-262411

Study shows Google’s Possum update changed 64% of local SERPs

How significantly did the Possum update impact local search results in Google? Columnist Joy Hawkins shares data and insights from a study she did with BrightLocal, which compared local results before and after the update.

The post Study shows Google’s Possum update changed 64% of local SERPs appeared first on Search Engine Land.

from

http://searchengineland.com/study-shows-googles-possum-update-changed-64-local-serps-261761

Meet a Landy Award winner: For its work with Lane Bryant, Razorfish wins Best Mobile SEM & Best Retail SEM Initiatives

Razorfish didn't miss a beat when shifting to a mobile-first, omnichannel strategy for the retail clothing chain.

The post Meet a Landy Award winner: For its work with Lane Bryant, Razorfish wins Best Mobile SEM & Best Retail SEM Initiatives appeared first on Search Engine Land.

from

http://searchengineland.com/meet-a-landy-award-winner-razorfish-for-lane-bryant-wins-best-mobile-sem-best-retail-sem-initiatives-261996

Google Home bests Amazon Echo & Alexa, for answering questions

If you've always wanted someone around the house to answer all the questions you have during the course of your day, that someone is a something -- Google Home.

The post Google Home bests Amazon Echo & Alexa, for answering questions appeared first on Search Engine Land.

from

http://searchengineland.com/google-home-amazon-echo-262438

How Google Home turns voice answers into clickable links

It turns out Google's new hands-free voice-based assistant has a way to let users click to the answers it gets from publishers. That's via the companion app.

The post How Google Home turns voice answers into clickable links appeared first on Search Engine Land.

from

http://searchengineland.com/google-home-voice-answers-clickable-links-262462

Wednesday, November 2, 2016

SearchCap: Yandex Palekh algorithm, Yext reviews & more search

Below is what happened in search today, as reported on Search Engine Land and from other places across the web.

The post SearchCap: Yandex Palekh algorithm, Yext reviews & more search appeared first on Search Engine Land.

from

http://searchengineland.com/searchcap-yandex-palekh-algorithm-yext-reviews-search-262419

Opinion: Google is biased toward reputation-damaging content

Why does reputation-damaging content seem to appear so quickly in Google’s search results? Columnist Chris Silver Smith outlines his theory on why Google's algorithm can give negative content greater ranking ability than it deserves.

The post Opinion: Google is biased toward reputation-damaging content appeared first on Search Engine Land.

from

http://searchengineland.com/opinion-google-biased-toward-reputation-damaging-content-262212

Yext Reviews product is ‘pre-optimized’ for Schema, balances reviews across sites

Company also says it has gained Yelp's OK with the review generation aspect of the product.

The post Yext Reviews product is ‘pre-optimized’ for Schema, balances reviews across sites appeared first on Search Engine Land.

from

http://searchengineland.com/yext-reviews-product-pre-optimized-schema-balances-reviews-across-sites-262363